OpenTelemetry Collector Testbed

The testbed is a controlled environment and set of tools for running end-to-end tests against the OpenTelemetry Collector, including reproducible short-term benchmarks, correctness tests, long-running stability tests, and maximum-load stress tests.

Usage

For each type of test that should have a summary report, create a new directory and then a test suite function that uses *testing.M. This function should delegate all functionality to testbed.DoTestMain, passing it a global instance of testbed.TestResultsSummary.

Each test case within the suite should create a testbed.TestCase and supply implementations of each of the various interfaces the NewTestCase function takes as parameters.
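The delegation pattern described above can be sketched without importing the testbed. Everything in this block is a hypothetical stand-in: `resultsSummary` only loosely imitates testbed.TestResultsSummary, `doTestMain` imitates the role of testbed.DoTestMain, and a plain `func() int` takes the place of `m.Run()`. The real signatures may differ.

```go
package main

import "fmt"

// resultsSummary is a simplified, hypothetical stand-in for
// testbed.TestResultsSummary; the real interface may differ.
type resultsSummary interface {
	Init(resultsDir string)
	Add(testName string, result string)
	Save()
}

// memorySummary keeps results in memory instead of writing a report file.
type memorySummary struct {
	dir     string
	results []string
	saved   bool
}

func (s *memorySummary) Init(resultsDir string) { s.dir = resultsDir }

func (s *memorySummary) Add(testName, result string) {
	s.results = append(s.results, testName+": "+result)
}

func (s *memorySummary) Save() { s.saved = true }

// suiteSummary is the single global summary shared by all test cases
// in one suite (one directory).
var suiteSummary = &memorySummary{}

// doTestMain imitates the delegation target: it prepares the summary,
// runs the tests, saves the summary, and returns the exit code.
func doTestMain(run func() int, summary resultsSummary) int {
	summary.Init("results")
	code := run()
	summary.Save()
	return code
}

func main() {
	// The closure stands in for m.Run(); each test case adds its result.
	code := doTestMain(func() int {
		suiteSummary.Add("TestTrace10kSPS", "PASS")
		return 0
	}, suiteSummary)
	fmt.Println(code, suiteSummary.results, suiteSummary.saved)
}
```

In an actual suite, the same shape would be a `TestMain(m *testing.M)` function whose body only calls testbed.DoTestMain with `m` and the global summary instance.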

DataFlow

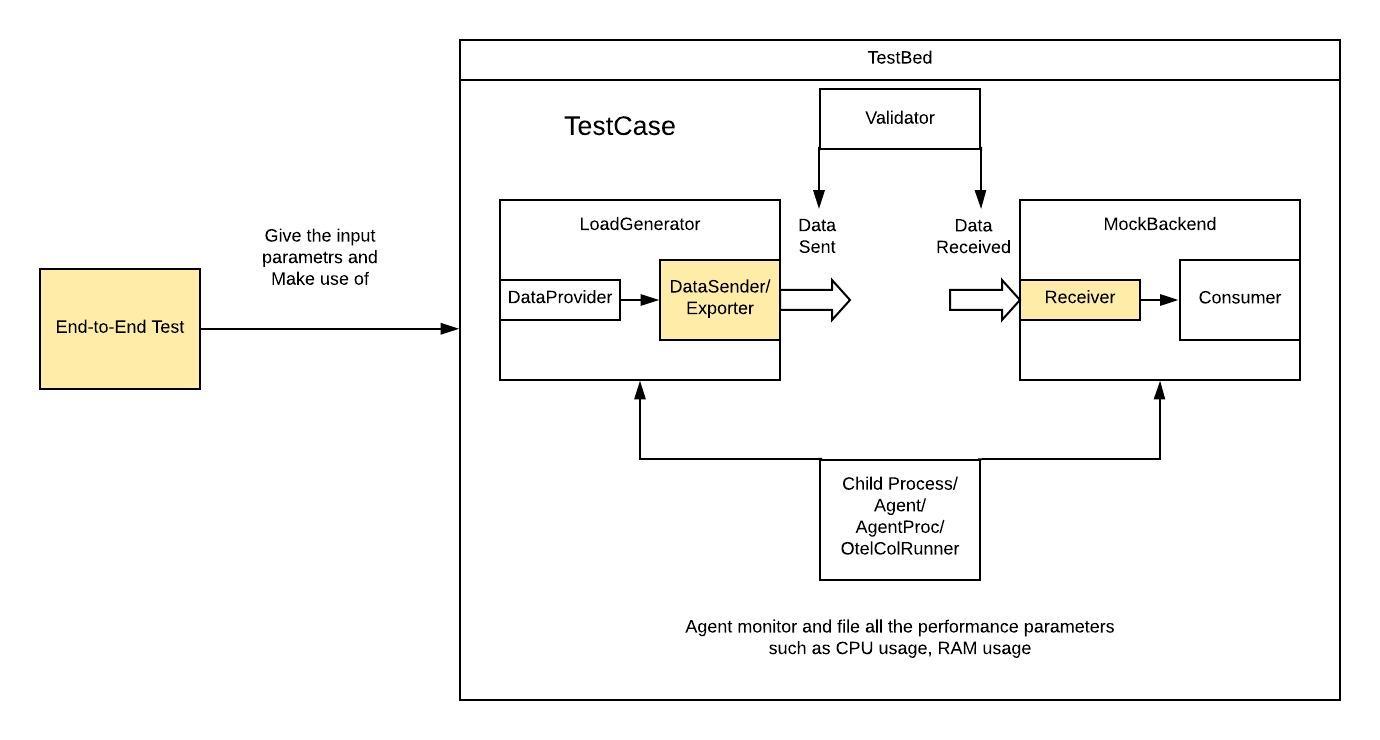

testbed.TestCase uses LoadGenerator and MockBackend to encapsulate the pluggable components. LoadGenerator in turn encapsulates DataProvider and DataSender in order to generate and send data. MockBackend encapsulates DataReceiver and provides the consume functionality.

For instance, the existing end-to-end test follows the general data flow shown in the diagram above. Note that MockBackend does not actually have a separate consumer instance; the diagram draws it as a separate module only to make the flow easier to follow.
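As a rough illustration of this composition, the sketch below wires a load generator (provider plus sender) to a mock backend. All type and method names are hypothetical stand-ins rather than the testbed's API, and the collector under test is reduced to a sender that forwards batches straight to the backend.

```go
package main

import "fmt"

// dataProvider and dataSender are hypothetical, reduced versions of the
// pluggable components that a load generator encapsulates.
type dataProvider interface{ nextBatch() []string }
type dataSender interface{ send(batch []string) }

// mockBackend stores everything it consumes for later assertions.
type mockBackend struct{ received []string }

func (b *mockBackend) consume(batch []string) {
	b.received = append(b.received, batch...)
}

// loadGenerator pulls batches from the provider and pushes them to the sender.
type loadGenerator struct {
	provider dataProvider
	sender   dataSender
	sent     int
}

func (g *loadGenerator) generate(batches int) {
	for i := 0; i < batches; i++ {
		batch := g.provider.nextBatch()
		g.sender.send(batch)
		g.sent += len(batch)
	}
}

// fixedProvider produces batches of a fixed size with numbered items.
type fixedProvider struct{ batch, size int }

func (p *fixedProvider) nextBatch() []string {
	p.batch++
	items := make([]string, p.size)
	for i := range items {
		items[i] = fmt.Sprintf("batch-%d-item-%d", p.batch, i+1)
	}
	return items
}

// passThroughSender stands in for "sender -> collector -> receiver":
// it hands each batch directly to the backend.
type passThroughSender struct{ backend *mockBackend }

func (s passThroughSender) send(batch []string) { s.backend.consume(batch) }

func main() {
	backend := &mockBackend{}
	gen := &loadGenerator{
		provider: &fixedProvider{size: 3},
		sender:   passThroughSender{backend: backend},
	}
	gen.generate(2)
	// A test would compare the number of items sent with the number received.
	fmt.Println(gen.sent, len(backend.received))
}
```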

Pluggable Test Components

- DataProvider - Generates test data to send to the receiver under test.
  - PerfTestDataProvider - Implementation of DataProvider for use in performance tests. Tracing IDs are based on the incremented batch and data item counters.
  - GoldenDataProvider - Implementation of DataProvider for use in correctness tests. Provides data from the "Golden" dataset generated using pairwise combinatorial testing techniques.

- DataSender - Sends data to the collector instance under test.
  - JaegerGRPCDataSender - Implementation of DataSender which sends to jaeger receiver.
  - OCTraceDataSender - Implementation of DataSender which sends to opencensus receiver.
  - OCMetricsDataSender - Implementation of DataSender which sends to opencensus receiver.
  - OTLPTraceDataSender - Implementation of DataSender which sends to otlp receiver.
  - OTLPMetricsDataSender - Implementation of DataSender which sends to otlp receiver.
  - ZipkinDataSender - Implementation of DataSender which sends to zipkin receiver.

- DataReceiver - Receives data from the collector instance under test and stores it for use in test assertions.
  - OCDataReceiver - Implementation of DataReceiver which receives data from opencensus exporter.
  - JaegerDataReceiver - Implementation of DataReceiver which receives data from jaeger exporter.
  - OTLPDataReceiver - Implementation of DataReceiver which receives data from otlp exporter.
  - ZipkinDataReceiver - Implementation of DataReceiver which receives data from zipkin exporter.

- OtelcolRunner - Configures, starts, and stops one or more instances of otelcol, which are the subject of the tests being executed.
  - ChildProcess - Implementation of OtelcolRunner that runs a single otelcol as a child process on the same machine as the test executor.
  - InProcessCollector - Implementation of OtelcolRunner that runs a single otelcol as a goroutine within the same process as the test executor.

- TestCaseValidator - Validates and reports on test results.
  - PerfTestValidator - Implementation of TestCaseValidator for test suites using PerformanceResults for summarizing results.
  - CorrectnessTestValidator - Implementation of TestCaseValidator for test suites using CorrectnessResults for summarizing results.

- TestResultsSummary - Records itemized test case results plus a summary of one category of testing.
  - PerformanceResults - Implementation of TestResultsSummary with fields suitable for reporting performance test results.
  - CorrectnessResults - Implementation of TestResultsSummary with fields suitable for reporting data translation correctness test results.
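To make the idea of counter-based tracing IDs concrete, here is a minimal sketch of a provider that derives each ID from an incrementing batch counter and an incrementing item counter. The byte layout (batch counter in the first 8 bytes, item counter in the last 8) and the names `traceIDFromCounters` and `counterProvider` are assumptions made for illustration; the layout PerfTestDataProvider actually uses is not described in this document.

```go
package main

import (
	"encoding/binary"
	"fmt"
)

// traceIDFromCounters packs the two counters into a 16-byte ID.
// The layout is an assumption for illustration only.
func traceIDFromCounters(batch, item uint64) [16]byte {
	var id [16]byte
	binary.BigEndian.PutUint64(id[:8], batch)
	binary.BigEndian.PutUint64(id[8:], item)
	return id
}

// counterProvider is a hypothetical provider that hands out IDs
// derived from its running counters.
type counterProvider struct {
	batch         uint64
	item          uint64
	itemsPerBatch int
}

// nextBatch increments the batch counter once and the item counter
// once per generated ID, so every ID is unique and reproducible.
func (p *counterProvider) nextBatch() [][16]byte {
	p.batch++
	ids := make([][16]byte, 0, p.itemsPerBatch)
	for i := 0; i < p.itemsPerBatch; i++ {
		p.item++
		ids = append(ids, traceIDFromCounters(p.batch, p.item))
	}
	return ids
}

func main() {
	p := &counterProvider{itemsPerBatch: 2}
	for _, id := range p.nextBatch() {
		fmt.Printf("%x\n", id)
	}
}
```

Deriving IDs from counters rather than random numbers keeps runs reproducible, which makes it straightforward to check on the receiving side whether any item was lost or duplicated.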

Adding New Receivers and/or Exporters to the Testbed

Generally, when designing a test for new exporter and receiver components, developers should focus mainly on designing and implementing the components with a yellow background in the diagram above, as the other components are implemented by the testbed framework:

- DataSender - This part should provide the following interfaces for testing purposes:
  - Start() - Start the sender and connect to the configured endpoint. Must be called before sending data.
  - Flush() - Send any accumulated data.
  - GetCollectorPort() - Return the port to which this sender will send data.
  - GenConfigYAMLStr() - Generate a config string to place in the receiver part of the collector config so that it can receive data from this sender.
  - ProtocolName() - Return the protocol name to use in the collector config pipeline.

- DataReceiver - This part should provide the following interfaces for testing purposes:
  - Start() - Start the receiver.
  - Stop() - Stop the receiver.
  - GenConfigYAMLStr() - Generate a config string to place in the exporter part of the collector config so that it can send data to this receiver.
  - ProtocolName() - Return the protocol name to use in the collector config pipeline.

- Testing - This part varies with the kind of testing developers would like to do. The existing implementation offers End-to-End testing, Metrics testing, Traces testing, Correctness Traces testing, and Correctness Metrics testing as references. For instance, if developers would like to design a trace test for a new exporter and receiver:

      func TestTrace10kSPS(t *testing.T) {
          // Each entry pairs a sender and receiver with its resource limits.
          tests := []struct {
              name         string
              sender       testbed.DataSender
              receiver     testbed.DataReceiver
              resourceSpec testbed.ResourceSpec
          }{
              {
                  "NewExporterOrReceiver",
                  testbed.NewXXXDataSender(testbed.DefaultHost, testbed.GetAvailablePort(t)),
                  testbed.NewXXXDataReceiver(testbed.GetAvailablePort(t)),
                  testbed.ResourceSpec{
                      ExpectedMaxCPU: XX,
                      ExpectedMaxRAM: XX,
                  },
              },
              ...
          }

          // Processors to include in the collector pipeline, keyed by name.
          processors := map[string]string{
              "batch": `
        batch:
      `,
          }

          for _, test := range tests {
              t.Run(test.name, func(t *testing.T) {
                  Scenario10kItemsPerSecond(
                      t,
                      test.sender,
                      test.receiver,
                      test.resourceSpec,
                      performanceResultsSummary,
                      processors,
                  )
              })
          }
      }
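The method lists above can be read as two small interfaces. The sketch below declares them locally and implements both for a made-up "xxx" protocol, then combines the generated fragments into a collector config. The method names come from the lists above, but the exact signatures, the `xxx` protocol, the YAML layout, and the `collectorConfig` helper are assumptions; the real testbed.DataSender and testbed.DataReceiver interfaces may differ.

```go
package main

import "fmt"

// dataSender and dataReceiver mirror the method names listed above;
// the signatures are assumed for illustration.
type dataSender interface {
	Start() error
	Flush()
	GetCollectorPort() int
	GenConfigYAMLStr() string
	ProtocolName() string
}

type dataReceiver interface {
	Start() error
	Stop() error
	GenConfigYAMLStr() string
	ProtocolName() string
}

// xxxSender is a hypothetical sender for a made-up "xxx" protocol.
type xxxSender struct {
	host    string
	port    int
	started bool
	pending []string // accumulated, not yet sent
	sent    int
}

func (s *xxxSender) Start() error          { s.started = true; return nil }
func (s *xxxSender) Flush()                { s.sent += len(s.pending); s.pending = nil }
func (s *xxxSender) GetCollectorPort() int { return s.port }
func (s *xxxSender) ProtocolName() string  { return "xxx" }

// GenConfigYAMLStr returns the fragment for the collector's receivers section.
func (s *xxxSender) GenConfigYAMLStr() string {
	return fmt.Sprintf("  xxx:\n    endpoint: \"%s:%d\"", s.host, s.port)
}

// xxxReceiver is the matching hypothetical receiver.
type xxxReceiver struct {
	host    string
	port    int
	running bool
}

func (r *xxxReceiver) Start() error         { r.running = true; return nil }
func (r *xxxReceiver) Stop() error          { r.running = false; return nil }
func (r *xxxReceiver) ProtocolName() string { return "xxx" }

// GenConfigYAMLStr returns the fragment for the collector's exporters section.
func (r *xxxReceiver) GenConfigYAMLStr() string {
	return fmt.Sprintf("  xxx:\n    endpoint: \"%s:%d\"", r.host, r.port)
}

// Compile-time checks that both types satisfy the sketched interfaces.
var (
	_ dataSender   = (*xxxSender)(nil)
	_ dataReceiver = (*xxxReceiver)(nil)
)

// collectorConfig shows where each generated fragment and protocol name
// ends up in a collector config with a single traces pipeline.
func collectorConfig(s dataSender, r dataReceiver) string {
	return fmt.Sprintf(
		"receivers:\n%s\nexporters:\n%s\nservice:\n  pipelines:\n    traces:\n      receivers: [%s]\n      exporters: [%s]\n",
		s.GenConfigYAMLStr(), r.GenConfigYAMLStr(), s.ProtocolName(), r.ProtocolName(),
	)
}

func main() {
	sender := &xxxSender{host: "127.0.0.1", port: 4317}
	receiver := &xxxReceiver{host: "127.0.0.1", port: 4318}
	fmt.Print(collectorConfig(sender, receiver))
}
```

Note the inversion: the sender's fragment goes into the collector's receivers section and the receiver's fragment into its exporters section, because the collector sits between the two.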

Run Tests and Get Results

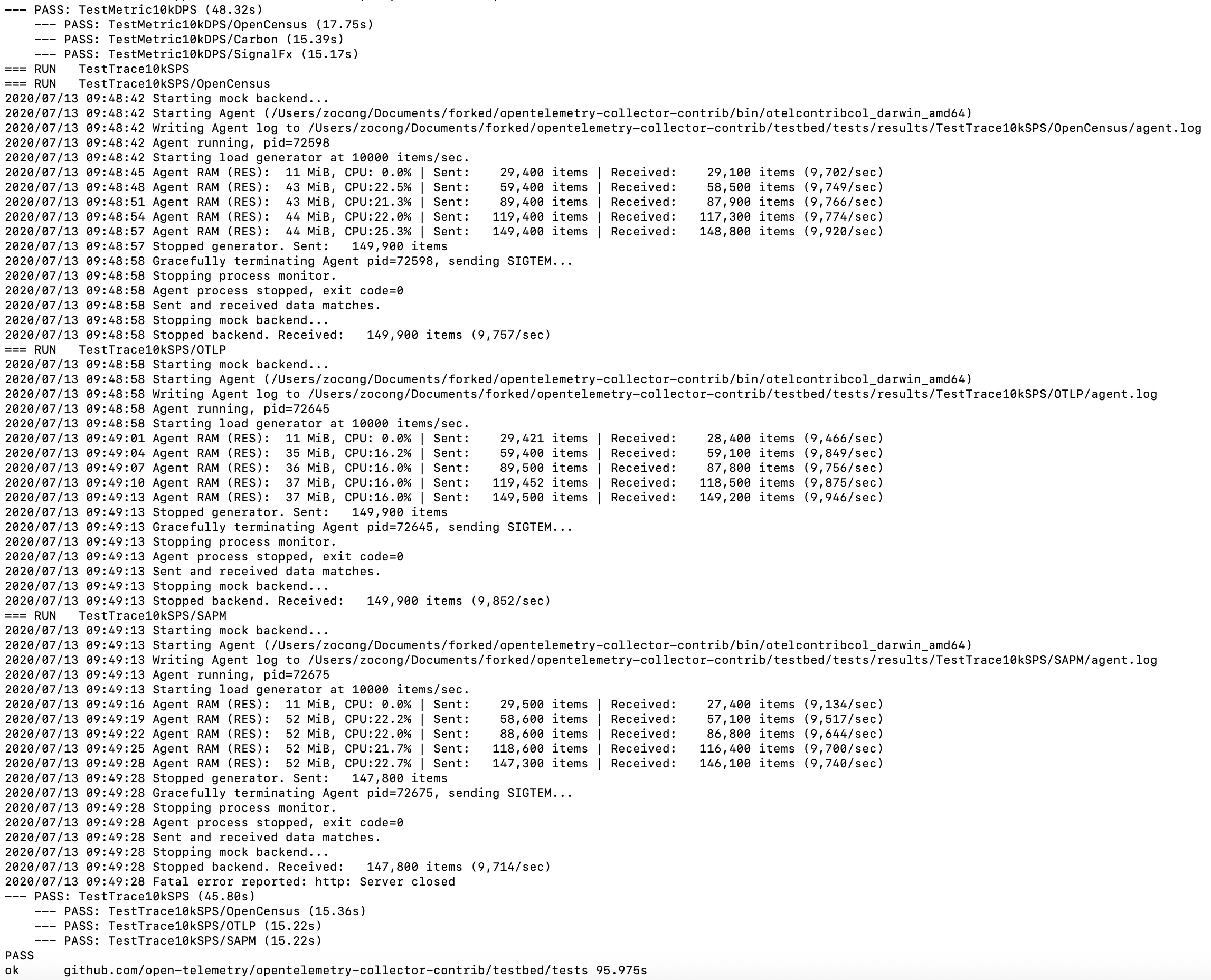

The following examples show how to run the tests and obtain the results.

- Under the collector-contrib repo, the following commands run all the tests:

      cd ./testbed
      ./runtests.sh

Then get the result:

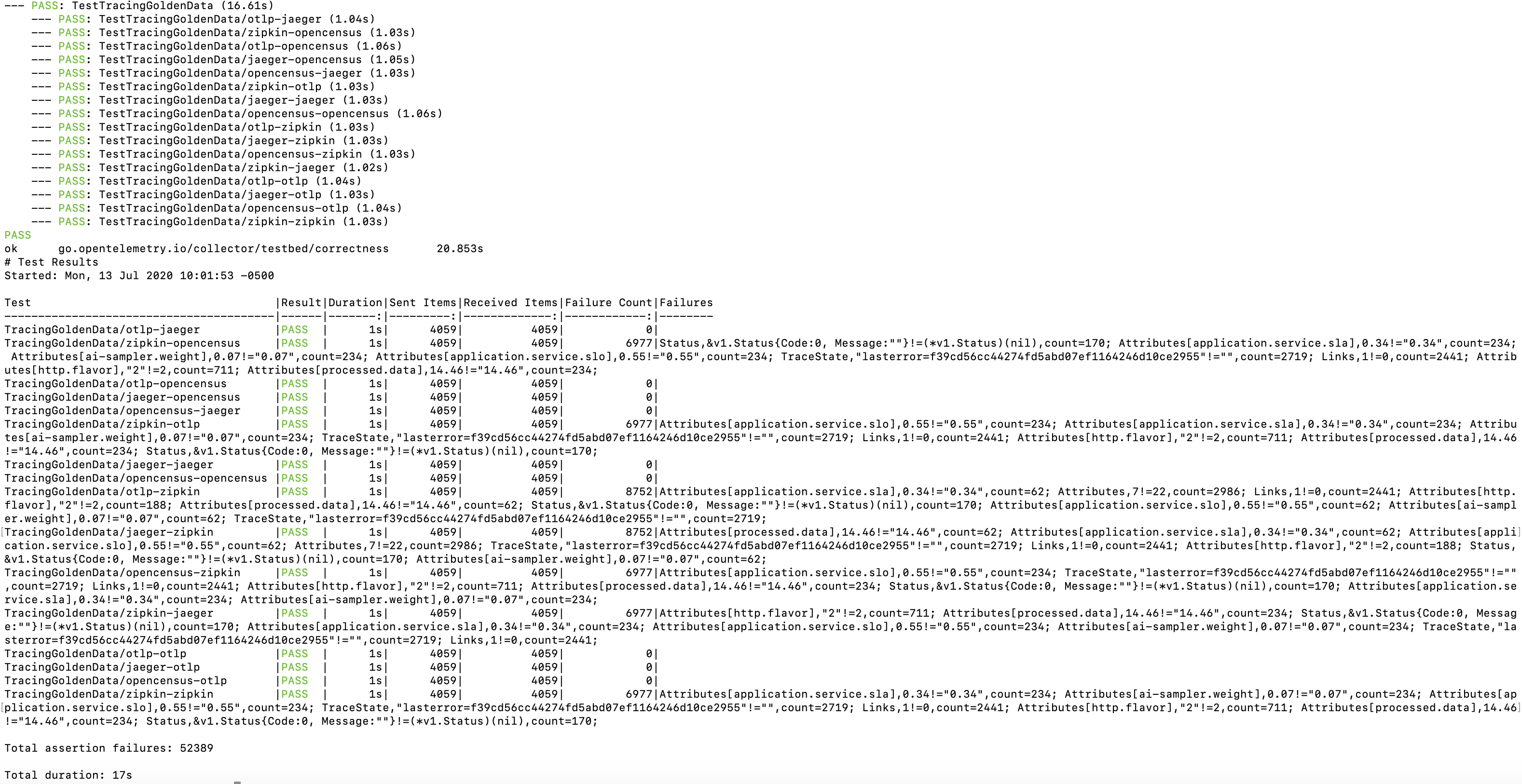

- Under the collector-contrib repo, the following commands run only the metrics correctness tests:

      cd ./testbed
      TESTS_DIR=correctnesstests/metrics ./runtests.sh

Then get the result: