
Apache Airflow

Apache Airflow (or simply Airflow) is a platform to programmatically author, schedule, and monitor workflows.

When workflows are defined as code, they become more maintainable, versionable, testable, and collaborative.

Use Airflow to author workflows as directed acyclic graphs (DAGs) of tasks. The Airflow scheduler executes your tasks on an array of workers while following the specified dependencies. Rich command line utilities make performing complex surgeries on DAGs a snap. The rich user interface makes it easy to visualize pipelines running in production, monitor progress, and troubleshoot issues when needed.
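As a rough illustration of what "following the specified dependencies" means, here is a minimal sketch in plain Python. It does not use Airflow or reflect how the Airflow scheduler is implemented; the task names are hypothetical, and `graphlib` (standard library, Python 3.9+) stands in for the dependency resolution.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical task names; each key maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
    "notify": {"load"},
}


def execution_order(deps):
    """Return one valid order in which every task runs after its upstream tasks."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

If the dependencies contained a cycle, `TopologicalSorter` would raise `graphlib.CycleError`, which is one way to see why the graph has to be acyclic.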

Table of contents

- Project Focus

- Principles

- Requirements

- Support for Python versions

- Getting started

- Installing from PyPI

- Official source code

- Convenience packages

- User Interface

- Contributing

- Who uses Apache Airflow?

- Who Maintains Apache Airflow?

- Can I use the Apache Airflow logo in my presentation?

- Airflow merchandise

- Links

Project Focus

Airflow works best with workflows that are mostly static and slowly changing. When the DAG structure is similar from one run to the next, it allows for clarity around the unit of work and continuity. Other similar projects include Luigi, Oozie and Azkaban.

Airflow is commonly used to process data, but has the opinion that tasks should ideally be idempotent (i.e. re-running a task produces the same results and does not create duplicated data in a destination system), and should not pass large quantities of data from one task to the next (though tasks can pass metadata using Airflow's XCom feature). For high-volume, data-intensive tasks, a best practice is to delegate to external services that specialize in that type of work.
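The idempotency idea can be sketched without Airflow at all. In the example below, `load_rows`, the `id` key and the in-memory `destination` dictionary are all hypothetical stand-ins for a task that writes to a destination system. Writing by key rather than appending is one common way to make a re-run harmless.

```python
def load_rows(destination, rows):
    """Write rows keyed by their id, so a re-run overwrites instead of appending."""
    for row in rows:
        destination[row["id"]] = row
    return destination


destination = {}  # stands in for a table in a destination system
batch = [{"id": 1, "value": "a"}, {"id": 2, "value": "b"}]

load_rows(destination, batch)
first_run = dict(destination)

# A retry or manual re-run of the same task with the same input.
load_rows(destination, batch)
```

After the second call the destination holds the same two rows as after the first one, so a retry does not create duplicates.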

Airflow is not a streaming solution, but it is often used to process real-time data, pulling data off streams in batches.

Principles

- Dynamic: Airflow pipelines are configuration as code (Python), allowing for dynamic pipeline generation. This allows for writing code that instantiates pipelines dynamically.

- Extensible: Easily define your own operators, executors and extend the library so that it fits the level of abstraction that suits your environment.

- Elegant: Airflow pipelines are lean and explicit. Parameterizing your scripts is built into the core of Airflow using the powerful Jinja templating engine.

- Scalable: Airflow has a modular architecture and uses a message queue to orchestrate an arbitrary number of workers.

Requirements

Apache Airflow is tested with:

| | Master version (dev) | Stable version (2.0.0) | Previous version (1.10.14) |
|---|---|---|---|
| Python | 3.6, 3.7, 3.8 | 3.6, 3.7, 3.8 | 2.7, 3.5, 3.6, 3.7, 3.8 |
| PostgreSQL | 9.6, 10, 11, 12, 13 | 9.6, 10, 11, 12, 13 | 9.6, 10, 11, 12, 13 |
| MySQL | 5.7, 8 | 5.7, 8 | 5.6, 5.7 |
| SQLite | 3.15.0+ | 3.15.0+ | 3.15.0+ |
| Kubernetes | 1.16.9, 1.17.5, 1.18.6 | 1.16.9, 1.17.5, 1.18.6 | 1.16.9, 1.17.5, 1.18.6 |

Note: MySQL 5.x versions either cannot run multiple schedulers or have limitations when doing so. Please see the "Scheduler" docs. MariaDB is not tested/recommended.

Note: SQLite is used in Airflow tests. Do not use it in production. We recommend using the latest stable version of SQLite for local development.

Support for Python versions

As of Airflow 2.0 we agreed to certain rules we follow for Python support. They are based on the official release schedule of Python, nicely summarized in the Python Developer's Guide.

- We finish support for Python versions when they reach EOL (for Python 3.6 this means that we will stop supporting it on 23.12.2021).

- The "oldest" supported version of Python is the default one. "Default" is only meaningful in terms of "smoke tests" in CI PRs, which are run using this default version.

- We support a new version of Python after it is officially released, as soon as we manage to make it work in our CI pipeline (which might not be immediate) and release a new version of Airflow (non-Patch version) based on this CI set-up.

Additional notes on Python version requirements

- Previous version requires at least Python 3.5.3 when using Python 3
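The first two rules can be read as a small computation. The sketch below is only an illustration, not part of Airflow: the version list is taken from the "Stable version (2.0.0)" column above, the only EOL date filled in is the one this README states for Python 3.6, and treating an unknown EOL date (`None`) as "still supported" is an assumption made for the example.

```python
from datetime import date

# Versions from the "Stable version (2.0.0)" column. Only the 3.6 EOL date is
# stated in this README; None means "not stated here" and is assumed supported.
EOL_DATES = {
    (3, 6): date(2021, 12, 23),
    (3, 7): None,
    (3, 8): None,
}


def supported_versions(today):
    """Versions that have not yet reached their EOL date on the given day."""
    return sorted(v for v, eol in EOL_DATES.items() if eol is None or today < eol)


def default_version(today):
    """The 'oldest' supported version is the default one."""
    return supported_versions(today)[0]


print(default_version(date(2021, 1, 1)))
print(default_version(date(2022, 1, 1)))
```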

Getting started

Visit the official Airflow website documentation (latest stable release) for help with installing Airflow, getting started, or walking through a more complete tutorial.

Note: If you're looking for documentation for the master branch (latest development branch), you can find it on s.apache.org/airflow-docs.

For more information on Airflow's Roadmap or Airflow Improvement Proposals (AIPs), visit the Airflow Wiki.

Official Docker (container) images for Apache Airflow are described in IMAGES.rst.

Installing from PyPI

We publish Apache Airflow as the apache-airflow package in PyPI. Installing it, however, can sometimes be tricky because Airflow is a bit of both a library and an application. Libraries usually keep their dependencies open and applications usually pin them, but we should do neither and both at the same time. We decided to keep our dependencies as open as possible (in setup.py) so users can install different versions of libraries if needed. This means that from time to time a plain pip install apache-airflow will not work or will produce an unusable Airflow installation.

To make installation repeatable, however, we also keep a set of "known-to-be-working" constraint files in the orphan constraints-master, constraints-2-0 and constraints-1-10 branches (introduced in Airflow 1.10.10 and updated in Airflow 1.10.12). We keep those "known-to-be-working" constraint files separately per major/minor Python version.

You can use them as constraint files when installing Airflow from PyPI. Note that you have to specify the correct Airflow tag/version/branch and Python version in the URL.

- Installing just Airflow:

NOTE!!!

In November 2020, a new version of pip (20.3) was released with a new 2020 resolver. This resolver does not yet work with Apache Airflow and might lead to installation errors, depending on your choice of extras. To install Airflow, either downgrade pip to version 20.2.4 with pip install --upgrade pip==20.2.4 or, if you use pip 20.3, add the option --use-deprecated legacy-resolver to your pip install command.

pip install apache-airflow==2.0.0 \
  --constraint "https://raw.githubusercontent.com/apache/airflow/constraints-2.0.0/constraints-3.7.txt"

- Installing with extras (for example postgres,google):

pip install apache-airflow[postgres,google]==2.0.0 \
  --constraint "https://raw.githubusercontent.com/apache/airflow/constraints-2.0.0/constraints-3.7.txt"
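The commands above follow a pattern that can be assembled programmatically. The helper below is a hypothetical sketch, not something shipped with Airflow: the function names are made up for illustration, the URL pattern is inferred from the two example commands, and adding the legacy resolver flag for every pip version from 20.3 onwards (rather than only 20.3) is an assumption.

```python
def constraints_url(airflow_version, python_version):
    """Build the constraints URL following the pattern in the commands above."""
    return (
        "https://raw.githubusercontent.com/apache/airflow/"
        f"constraints-{airflow_version}/constraints-{python_version}.txt"
    )


def pip_install_args(airflow_version, python_version, extras=(), pip_version=None):
    """Return the pip command as a list of arguments (hypothetical helper)."""
    package = "apache-airflow"
    if extras:
        package += "[" + ",".join(extras) + "]"
    args = [
        "pip", "install", f"{package}=={airflow_version}",
        "--constraint", constraints_url(airflow_version, python_version),
    ]
    if pip_version is not None:
        # Compare only major.minor, e.g. "20.2.4" -> (20, 2).
        major_minor = tuple(int(part) for part in pip_version.split(".")[:2])
        if major_minor >= (20, 3):  # assumption: applies to 20.3 and later
            args += ["--use-deprecated", "legacy-resolver"]
    return args


print(" ".join(pip_install_args("2.0.0", "3.7", extras=("postgres", "google"))))
```

Passing `pip_version="20.3"` appends `--use-deprecated legacy-resolver`, while `pip_version="20.2.4"` leaves the command unchanged.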

For information on installing backport providers check backport-providers.rst.

Official source code

Apache Airflow is an Apache Software Foundation (ASF) project, and our official source code releases:

- Follow the ASF Release Policy

- Can be downloaded from the ASF Distribution Directory

- Are cryptographically signed by the release manager

- Are officially voted on by the PMC members during the Release Approval Process

Following the ASF rules, the source packages released must be sufficient for a user to build and test the release provided they have access to the appropriate platform and tools.

Convenience packages

There are other ways of installing and using Airflow. Those are "convenience" methods - they are not "official releases" as stated by the ASF Release Policy, but they can be used by the users who do not want to build the software themselves.

Those are - in the order of most common ways people install Airflow:

- PyPI releases to install Airflow using the standard pip tool

- Docker Images to install Airflow via the docker tool, use them in Kubernetes, Helm Charts, docker-compose, docker swarm, etc. You can read more about using, customising, and extending the images in the Latest docs, and learn details on the internals in the IMAGES.rst document.

- Tags in GitHub to retrieve the git project sources that were used to generate official source packages via git

All those artifacts are not official releases, but they are prepared using officially released sources. Some of those artifacts are "development" or "pre-release" ones, and they are clearly marked as such following the ASF Policy.

User Interface

-

DAGs: Overview of all DAGs in your environment.

-

Tree View: Tree representation of a DAG that spans across time.

-

Graph View: Visualization of a DAG's dependencies and their current status for a specific run.

-

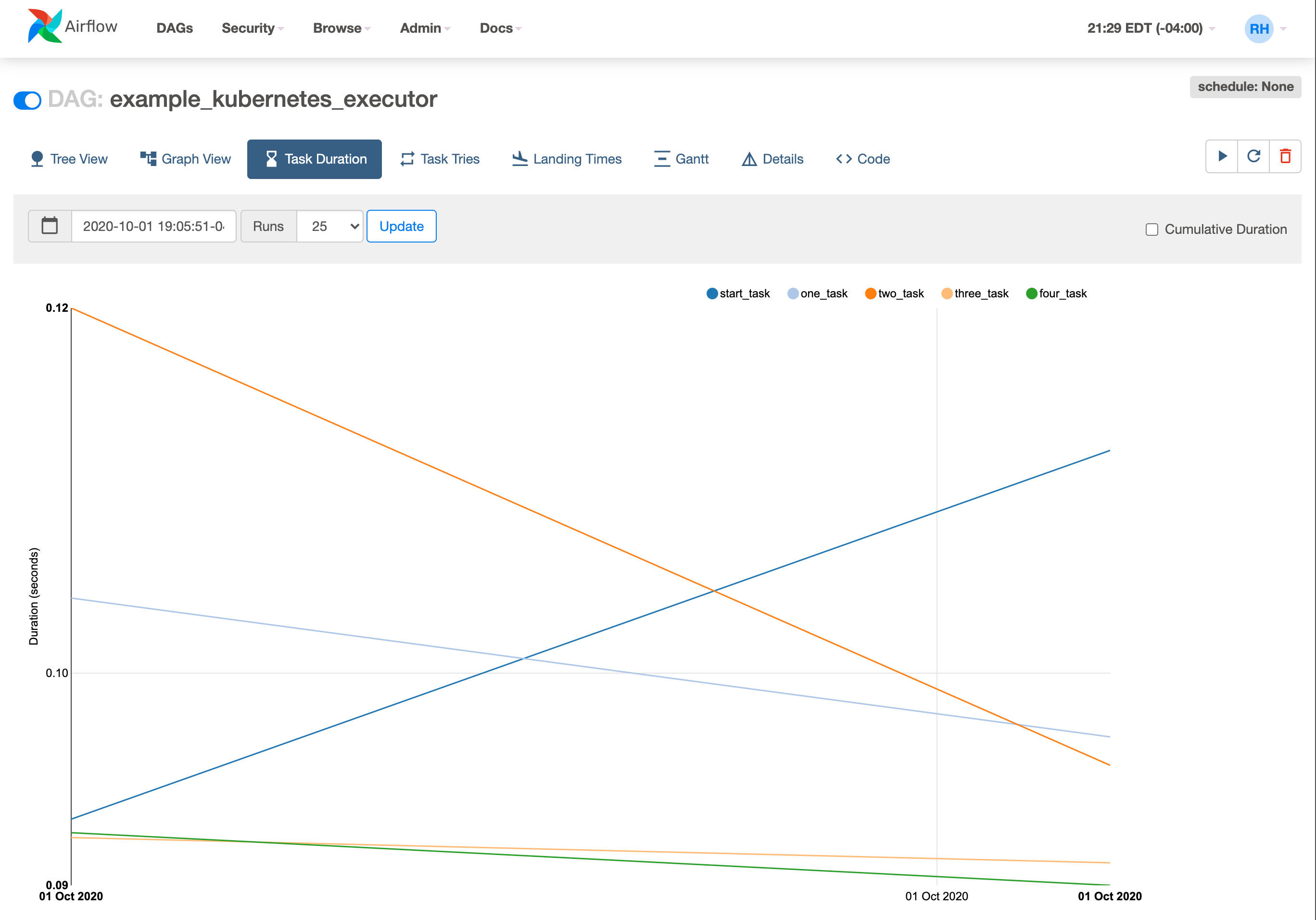

Task Duration: Total time spent on different tasks over time.

-

Gantt View: Duration and overlap of a DAG.

-

Code View: Quick way to view source code of a DAG.

Contributing

Want to help build Apache Airflow? Check out our contributing documentation.

Who uses Apache Airflow?

More than 350 organizations are using Apache Airflow in the wild.

Who Maintains Apache Airflow?

Airflow is the work of the community, but the core committers/maintainers are responsible for reviewing and merging PRs as well as steering conversation around new feature requests. If you would like to become a maintainer, please review the Apache Airflow committer requirements.

Can I use the Apache Airflow logo in my presentation?

Yes! Be sure to abide by the Apache Foundation trademark policies and the Apache Airflow Brandbook. The most up to date logos are found in this repo and on the Apache Software Foundation website.

Airflow merchandise

If you would love to have Apache Airflow stickers, t-shirts, etc., then check out the Redbubble Shop.