
Airflow

Airflow is a platform to programmatically author, schedule and monitor workflows.

When workflows are defined as code, they become more maintainable, versionable, testable, and collaborative.

Use Airflow to author workflows as directed acyclic graphs (DAGs) of tasks. The Airflow scheduler executes your tasks on an array of workers while following the specified dependencies. Rich command line utilities make performing complex surgeries on DAGs a snap. The rich user interface makes it easy to visualize pipelines running in production, monitor progress, and troubleshoot issues when needed.
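To make the idea of tasks running in dependency order concrete, here is a minimal sketch in plain Python using the standard library's `graphlib` module (Python 3.9+). It is not Airflow's API or its scheduler, and the task names (`extract`, `transform`, `load`, `report`) are hypothetical. It only shows how a DAG of tasks yields a valid execution order.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each key is a task, each value is the set of
# tasks that must finish before it can start.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"transform"},
}


def execution_order(deps):
    """Return one valid run order that respects every dependency."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

Because `load` and `report` both depend only on `transform`, either may come first in the printed order; a scheduler with several workers could run them in parallel.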

Getting started

Please visit the Airflow Platform documentation for help with installing Airflow, getting a quick start, or a more complete tutorial.

For further information, please visit the Airflow Wiki.

Beyond the Horizon

Airflow is not a data streaming solution. Tasks do not move data from one to the other (though tasks can exchange metadata!). Airflow is not in the Spark Streaming or Storm space; it is more comparable to Oozie or Azkaban.

Workflows are expected to be mostly static or slowly changing. You can think of the structure of the tasks in your workflow as slightly more dynamic than a database structure would be. Airflow workflows are expected to look similar from one run to the next, which allows for clarity around unit of work and continuity.

Principles

- Dynamic: Airflow pipelines are configuration as code (Python), allowing for dynamic pipeline generation. This allows for writing code that instantiates pipelines dynamically.

- Extensible: Easily define your own operators, executors and extend the library so that it fits the level of abstraction that suits your environment.

- Elegant: Airflow pipelines are lean and explicit. Parameterizing your scripts is built into the core of Airflow using the powerful Jinja templating engine.

- Scalable: Airflow has a modular architecture and uses a message queue to orchestrate an arbitrary number of workers. Airflow is ready to scale to infinity.
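As an illustration of the "Dynamic" principle above, the following sketch generates a workflow definition in a loop rather than writing each task by hand. It is plain Python, not Airflow's API: the table names, the `build_pipeline` helper, and the dict-of-sets representation are all assumptions made for this example.

```python
# Hypothetical table names; in a real deployment these might come from
# a config file or a database query.
TABLES = ["users", "orders", "payments"]


def build_pipeline(tables):
    """Generate one export task per table, plus a final summary task.

    Returns a dict mapping each task name to the set of task names it
    depends on. This stands in for a workflow definition; it is not an
    Airflow object.
    """
    pipeline = {}
    for table in tables:
        pipeline[f"export_{table}"] = set()
    # The summary task waits for every generated export task.
    pipeline["summarize"] = {f"export_{t}" for t in tables}
    return pipeline


pipeline = build_pipeline(TABLES)
for task, upstream in pipeline.items():
    print(task, "<-", sorted(upstream))
```

Adding a table to `TABLES` adds a task and its dependency edge without touching the rest of the definition, which is the practical benefit of treating configuration as code.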

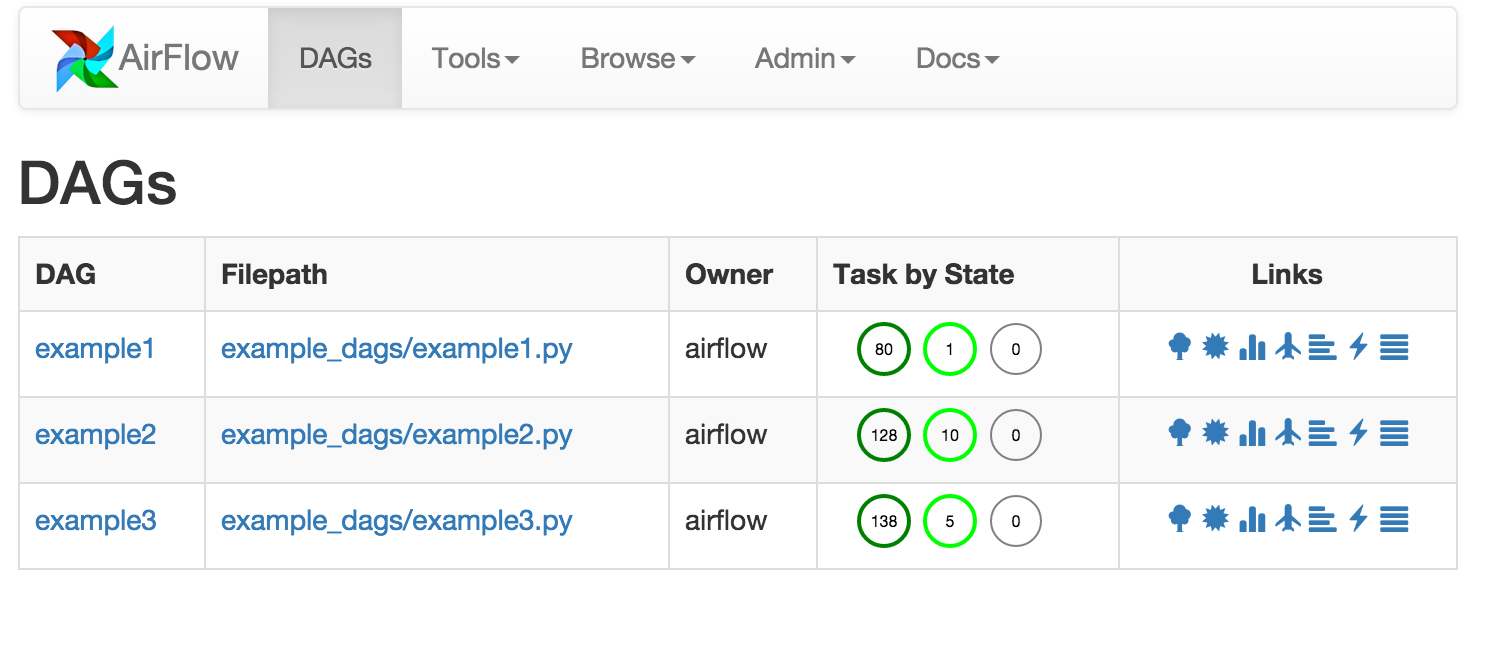

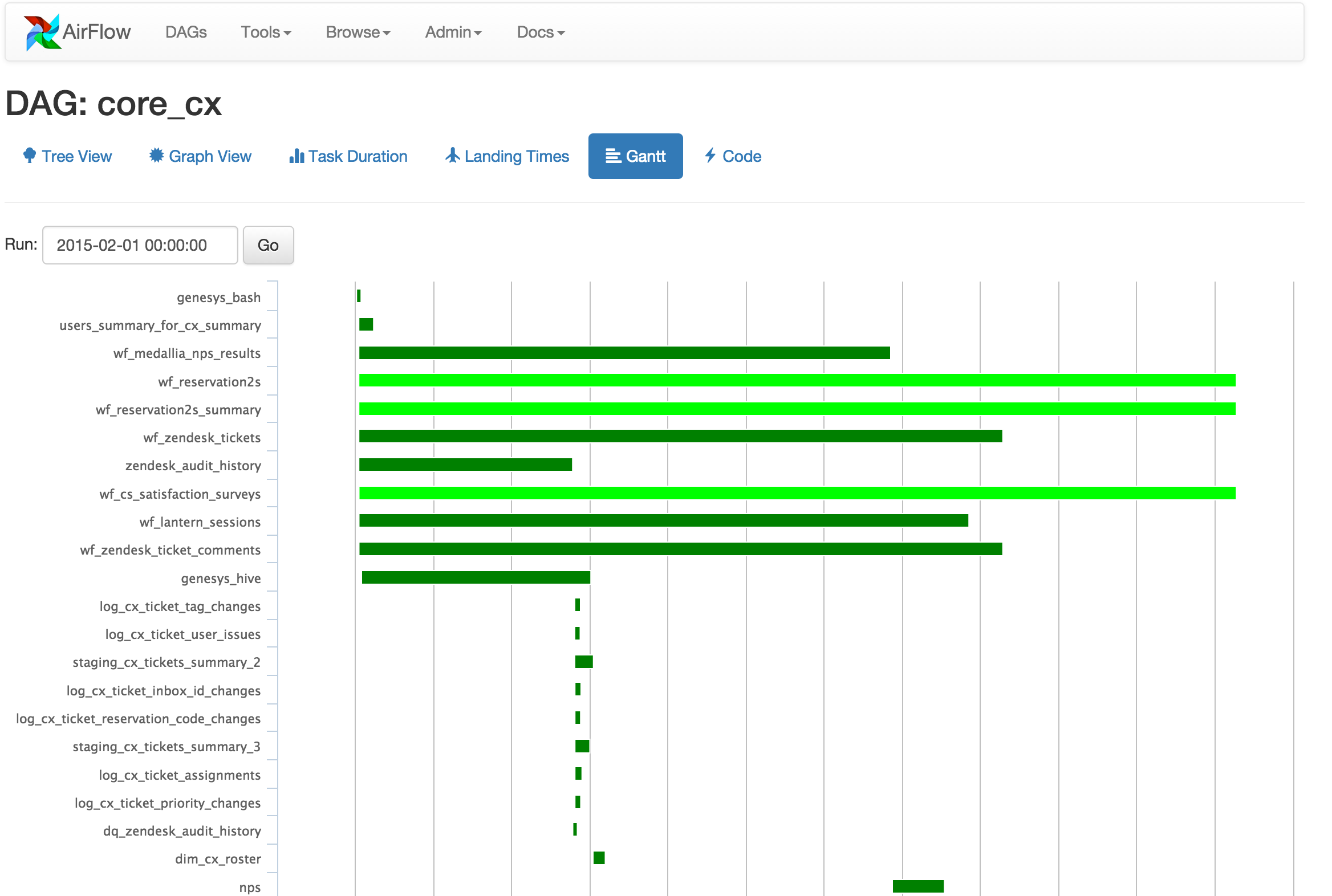

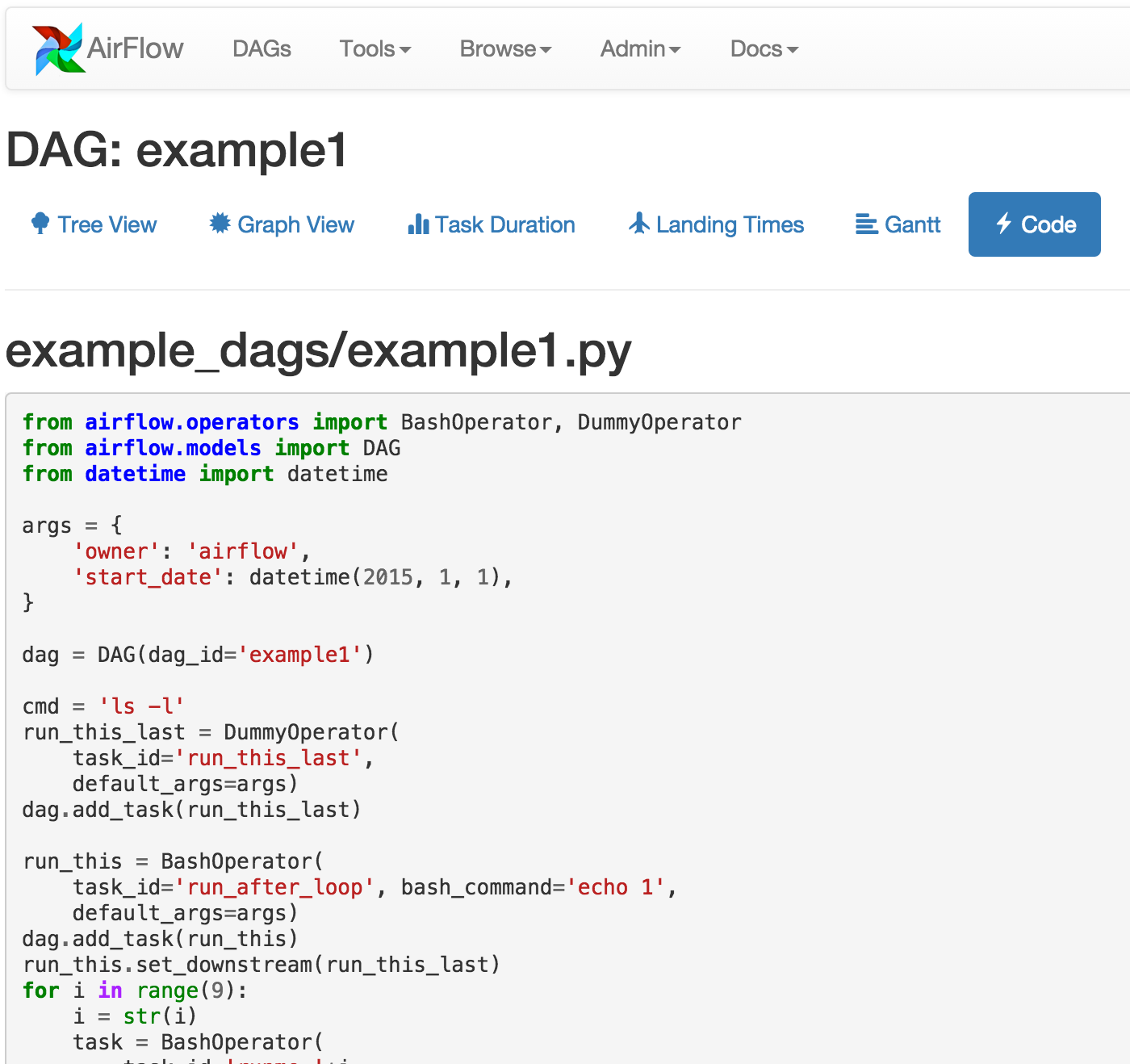

User Interface

- Tree View: Tree representation of a DAG that spans across time.

- Graph View: Visualization of a DAG's dependencies and their current status for a specific run.

- Task Duration: Total time spent on different tasks over time.

Who uses Airflow?

As the Airflow community grows, we'd like to keep track of who is using the platform. Please send a PR with your company name and @githubhandle if you'd like to be listed.

Committers:

- Refer to Committers

Currently officially using Airflow:

- Airbnb [@mistercrunch, @artwr]

- [Agari](https://github.com/agaridata) [@r39132]

- allegro.pl [@kretes]

- Bellhops

- BlueApron [@jasonjho & @matthewdavidhauser]

- [Clover Health](https://www.cloverhealth.com) [@gwax & @vansivallab]

- Chartboost [@cgelman & @dclubb]

- Cotap [@maraca & @richardchew]

- Easy Taxi [@caique-lima]

- FreshBooks [@DinoCow]

- Gentner Lab [@neuromusic]

- Glassdoor [@syvineckruyk]

- Handy [@marcintustin / @mtustin-handy]

- Holimetrix [@thibault-ketterer]

- Hootsuite

- ING

- Jampp

- Kiwi.com [@underyx]

- Kogan.com [@geeknam]

- LendUp [@lendup]

- LingoChamp [@haitaoyao]

- Lucid [@jbrownlucid & @kkourtchikov]

- Lyft [@SaurabhBajaj]

- Nerdwallet

- Sense360 [@kamilmroczek]

- Sidecar [@getsidecar]

- SimilarWeb [@similarweb]

- SmartNews [@takus]

- Stripe [@jbalogh]

- Thumbtack [@natekupp]

- WeTransfer [@jochem]

- Wooga

- Xoom [@gepser & @omarvides]

- WePay [@criccomini & @mtagle]

- Yahoo!

- Zendesk

Links

- Full documentation on pythonhosted.org

- Airflow Apache (incubating) (mailing list)

- Airbnb Blog Post about Airflow

- Airflow Common Pitfalls

- Hadoop Summit Airflow Video

- Airflow at Agari Blog Post

- Best practices with Airflow (Max), Nov 2015

- Airflow (Lesson 1) : TriggerDagRunOperator

- Docker Airflow (externally maintained)

- Airflow: Tips, Tricks, and Pitfalls @ Handy

- Airflow Chef recipe (community contributed) [github](https://github.com/bahchis/airflow-cookbook) [chef](https://supermarket.chef.io/cookbooks/airflow)

- Airflow Puppet Module (community contributed) [github](https://github.com/similarweb/puppet-airflow) [puppet forge](https://forge.puppetlabs.com/similarweb/airflow)

- Gitter (live chat) Channel