
Airflow

NOTE: The transition from 1.8.0 (or before) to 1.8.1 (or after) requires uninstalling Airflow before installing the new version. The package name was changed from airflow to apache-airflow as of version 1.8.1.

Airflow is a platform to programmatically author, schedule and monitor workflows.

When workflows are defined as code, they become more maintainable, versionable, testable, and collaborative.

Use Airflow to author workflows as directed acyclic graphs (DAGs) of tasks. The Airflow scheduler executes your tasks on an array of workers while following the specified dependencies. Rich command line utilities make performing complex surgeries on DAGs a snap. The rich user interface makes it easy to visualize pipelines running in production, monitor progress, and troubleshoot issues when needed.
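To make the idea of a DAG of tasks concrete, here is a minimal sketch in plain Python. It is not Airflow's API: the task names are hypothetical, and the standard-library `graphlib` module (Python 3.9+) stands in for the scheduler's dependency resolution. It only shows how declared dependencies determine a valid execution order.

```python
from graphlib import TopologicalSorter

# Hypothetical task names; each key maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "validate": {"extract"},
    "load": {"transform", "validate"},
}


def execution_order(deps):
    """Return one valid order in which the tasks could run.

    Raises graphlib.CycleError if the dependencies contain a cycle,
    that is, if the graph is not actually acyclic.
    """
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

In this sketch `extract` always runs first and `load` always runs last, while `transform` and `validate` have no dependency on each other and could run in either order or in parallel on separate workers.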

Getting started

Please visit the Airflow Platform documentation for help with installing Airflow, getting a quick start, or a more complete tutorial.

For further information, please visit the Airflow Wiki.

Beyond the Horizon

Airflow is not a data streaming solution. Tasks do not move data from one to the other (though tasks can exchange metadata!). Airflow is not in the Spark Streaming or Storm space; it is more comparable to Oozie or Azkaban.

Workflows are expected to be mostly static or slowly changing. You can think of the structure of the tasks in your workflow as slightly more dynamic than a database structure would be. Airflow workflows are expected to look similar from one run to the next, which allows for clarity around the unit of work and continuity.

Principles

- Dynamic: Airflow pipelines are defined as code (Python), so you can write code that generates and instantiates pipelines dynamically.

- Extensible: Easily define your own operators, executors and extend the library so that it fits the level of abstraction that suits your environment.

- Elegant: Airflow pipelines are lean and explicit. Parameterizing your scripts is built into the core of Airflow using the powerful Jinja templating engine.

- Scalable: Airflow has a modular architecture and uses a message queue to orchestrate an arbitrary number of workers. Airflow is ready to scale to infinity.
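The "Dynamic" and "Elegant" principles above can be illustrated with a small, hedged sketch. It uses only the Python standard library, not Airflow itself: `string.Template` is swapped in as a stand-in for Jinja templating, and the table names, command strings, and `build_pipeline` helper are all hypothetical. The point is only that a loop in ordinary code can generate a pipeline's tasks and fill in their parameters.

```python
from string import Template

# Hypothetical table names and command templates, for illustration only.
TABLES = ["users", "orders", "payments"]
EXPORT_COMMAND = Template("export_table --table $table --date $ds")
REPORT_COMMAND = Template("publish_report --date $ds")


def build_pipeline(tables, ds):
    """Generate one export task per table, plus a final task that waits on all of them."""
    tasks = {}
    for table in tables:
        tasks[f"export_{table}"] = {
            # Parameters are substituted into the command template.
            "command": EXPORT_COMMAND.substitute(table=table, ds=ds),
            "upstream": [],
        }
    export_names = sorted(tasks)
    tasks["publish_report"] = {
        "command": REPORT_COMMAND.substitute(ds=ds),
        "upstream": export_names,
    }
    return tasks


pipeline = build_pipeline(TABLES, "2017-07-01")
for name, task in pipeline.items():
    print(name, "->", task["command"], "| upstream:", task["upstream"])
```

Adding a table to `TABLES` adds a task to the generated pipeline without any other change, which is the practical benefit of describing pipelines in code rather than in static configuration.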

User Interface

-

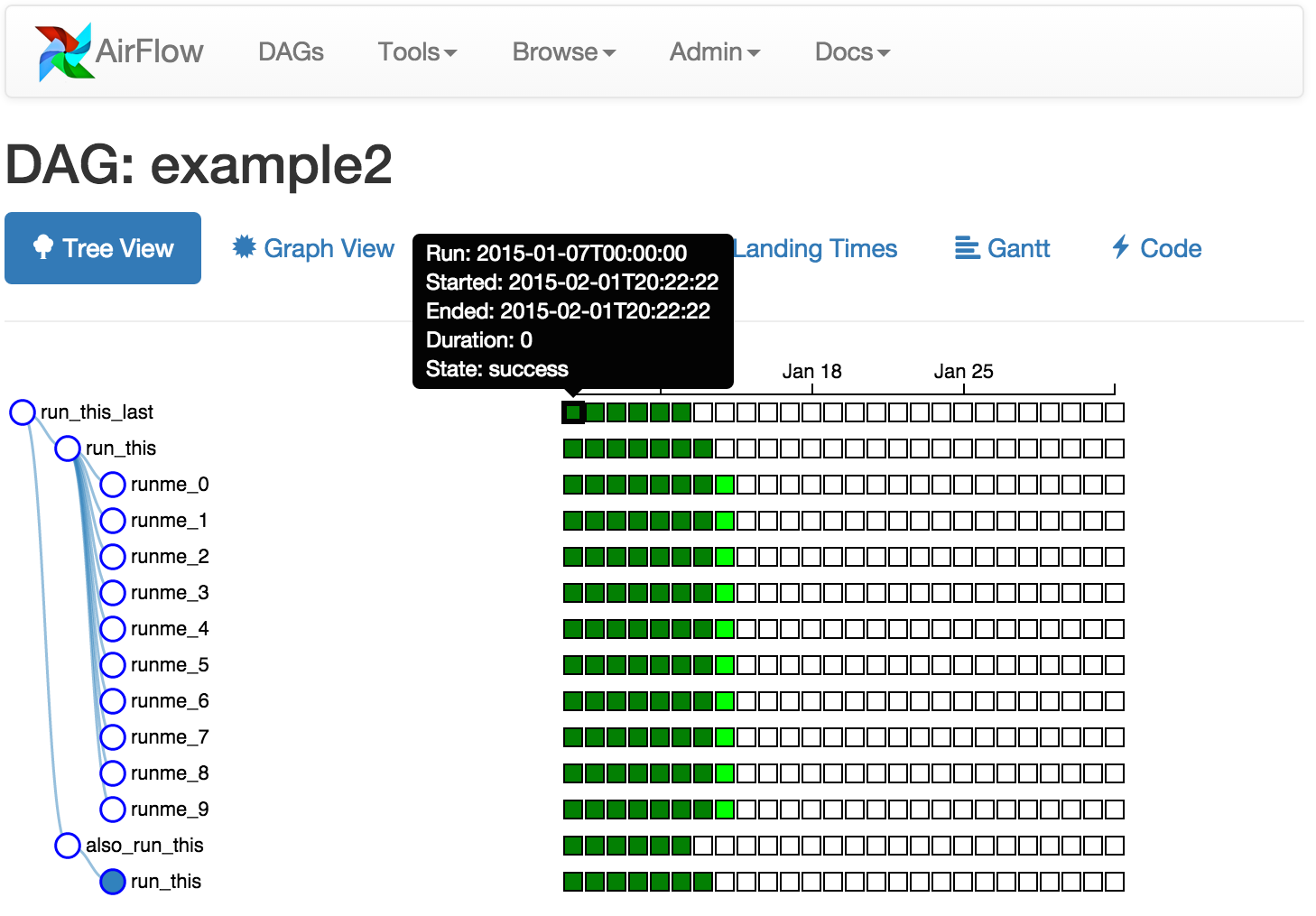

Tree View: Tree representation of a DAG that spans across time.

-

Graph View: Visualization of a DAG's dependencies and their current status for a specific run.

-

Task Duration: Total time spent on different tasks over time.

Who uses Airflow?

As the Airflow community grows, we'd like to keep track of who is using the platform. If you use Airflow, please send a PR with your company name and @githubhandle.

Committers:

- Refer to Committers

Currently officially using Airflow:

- Airbnb [@mistercrunch, @artwr]

- Agari [@r39132]

- allegro.pl [@kretes]

- AltX [@pedromduarte]

- Apigee [@btallman]

- ARGO Labs [California Data Collaborative]

- Astronomer [@schnie]

- Auth0 [@sicarul]

- BandwidthX [@dineshdsharma]

- Bellhops

- BlaBlaCar [@puckel & @wmorin]

- Bloc [@dpaola2]

- BlueApron [@jasonjho & @matthewdavidhauser]

- Blue Yonder [@blue-yonder]

- California Data Collaborative powered by ARGO Labs

- Celect [@superdosh & @chadcelect]

- Change.org [@change, @vijaykramesh]

- Checkr [@tongboh]

- Children's Hospital of Philadelphia Division of Genomic Diagnostics [@genomics-geek]

- City of San Diego [@MrMaksimize, @andrell81 & @arnaudvedy]

- Clairvoyant [@shekharv]

- Clover Health [@gwax & @vansivallab]

- Chartboost [@cgelman & @dclubb]

- Cotap [@maraca & @richardchew]

- Credit Karma [@preete-dixit-ck & @harish-gaggar-ck & @greg-finley-ck]

- DataFox [@sudowork]

- Digital First Media [@duffn & @mschmo & @seanmuth]

- Drivy [@AntoineAugusti]

- Easy Taxi [@caique-lima & @WesleyBatista]

- eRevalue [@hamedhsn]

- evo.company [@orhideous]

- FreshBooks [@DinoCow]

- Gentner Lab [@neuromusic]

- Glassdoor [@syvineckruyk]

- GovTech GDS [@chrissng & @datagovsg]

- Grand Rounds [@richddr, @timz1290 & @wenever]

- Groupalia [@jesusfcr]

- Gusto [@frankhsu]

- Handshake [@mhickman]

- Handy [@marcintustin / @mtustin-handy]

- HBO [@yiwang]

- HelloFresh [@tammymendt & @davidsbatista & @iuriinedostup]

- Holimetrix [@thibault-ketterer]

- Hootsuite

- IFTTT [@apurvajoshi]

- iHeartRadio [@yiwang]

- imgix [@dclubb]

- ING

- Jampp

- Kiwi.com [@underyx]

- Kogan.com [@geeknam]

- Lemann Foundation [@fernandosjp]

- LendUp [@lendup]

- LetsBonus [@jesusfcr & @OpringaoDoTurno]

- liligo [@tromika]

- LingoChamp [@haitaoyao]

- Lucid [@jbrownlucid & @kkourtchikov]

- Lumos Labs [@rfroetscher & @zzztimbo]

- Lyft [@SaurabhBajaj]

- Madrone [@mbreining & @scotthb]

- Markovian [@al-xv, @skogsbaeck, @waltherg]

- Mercadoni [@demorenoc]

- Mercari [@yu-iskw]

- MiNODES [@dice89, @diazcelsa]

- MFG Labs

- mytaxi [@mytaxi]

- Nerdwallet

- New Relic [@marcweil]

- Nextdoor [@SivaPandeti, @zshapiro & @jthomas123]

- OfferUp

- OneFineStay [@slangwald]

- Open Knowledge International [@vitorbaptista]

- Pandora Media [@Acehaidrey]

- PayPal [@jhsenjaliya]

- Postmates [@syeoryn]

- Pronto Tools [@zkan & @mesodiar]

- Qubole [@msumit]

- Quora

- Robinhood [@vineet-rh]

- Scaleway [@kdeldycke]

- Sense360 [@kamilmroczek]

- Shopkick [@shopkick]

- Sidecar [@getsidecar]

- SimilarWeb [@similarweb]

- SmartNews [@takus]

- Spotify [@znichols]

- Stackspace

- Stripe [@jbalogh]

- Tails.com [@alanmcruickshank]

- Thumbtack [@natekupp]

- Tictail

- T2 Systems [@unclaimedpants]

- United Airlines [@ilopezfr]

- Vente-Exclusive.com [@alexvanboxel]

- Vnomics [@lpalum]

- WePay [@criccomini & @mtagle]

- WeTransfer [@jochem]

- Whistle Labs [@ananya77041]

- WiseBanyan

- Wooga

- Xoom [@gepser & @omarvides]

- Yahoo!

- Zapier [@drknexus & @statwonk]

- Zendesk

- Zenly [@cerisier & @jbdalido]

- Zymergen

- 99 [@fbenevides, @gustavoamigo & @mmmaia]